Learn the AWS Toolkit with ASP.NET Core deployment

This AWS tutorial is for beginners who want to learn AWS without any previous cloud experience.

It assumes you already know ASP.NET Core development.

All examples use ASP.NET Core. Topics covered:

- What an IAM user is, and how to create one and set its permissions from the console account.

- How to deploy your ASP.NET Core application to AWS Elastic Beanstalk using the AWS Toolkit for Visual Studio.

- Brief examples of working with DynamoDB from ASP.NET Core and publishing to AWS.

- Working with Amazon S3 from an ASP.NET Core application.

- How to work with Amazon SQS.

First, log into your AWS Console. Create an account if you don't have one; AWS offers one year of free-tier usage for many services.

From your root user account, create an IAM user with credentials (an access key and a secret key). The application will use this user to connect to AWS and perform all activities.

After creating the AWS account, the IAM user credentials and the S3 buckets, add the details to your appsettings.json file.

"AWS": {

"Profile": "ProfileDefault",

"Region": "us-east-1"

},

"AwsIAMCredentials": {

"AccessKey": "your-access-key",

"SecretKey": "your-secret-key"

},

"AwsS3": {

"AWSBucketPrivate": "your-private-bucket-name",

"AWSBucketPublic": "your-public-bucket-name"

}

We will read these keys in various places in the application to interact with AWS services.
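As a rough sketch of how these settings can be wired up at startup, the following Program.cs assumes ASP.NET Core 6 or later with minimal hosting and the NuGet packages AWSSDK.Extensions.NETCore.Setup, AWSSDK.DynamoDBv2 and AWSSDK.S3. None of this is taken from the original project; it only shows one common way to make the SDK clients available through dependency injection. Note that `GetAWSOptions()` reads the "AWS" section (Profile and Region) and resolves credentials through the named profile, not through the "AwsIAMCredentials" section.

```csharp
// Program.cs: a sketch under the assumptions stated above, not the author's setup.
using Amazon.DynamoDBv2;
using Amazon.DynamoDBv2.DataModel;
using Amazon.S3;

var builder = WebApplication.CreateBuilder(args);
builder.Services.AddRazorPages();

// Reads "AWS:Profile" and "AWS:Region" from appsettings.json.
builder.Services.AddDefaultAWSOptions(builder.Configuration.GetAWSOptions());

// Register the SDK clients so page models can receive them in their constructors.
builder.Services.AddAWSService<IAmazonDynamoDB>();
builder.Services.AddAWSService<IAmazonS3>();

// IDynamoDBContext is the high-level object mapper built on top of the client.
builder.Services.AddScoped<IDynamoDBContext, DynamoDBContext>();

var app = builder.Build();
app.MapRazorPages();
app.Run();
```

The custom sections ("AwsIAMCredentials", "AwsS3") are still available anywhere `IConfiguration` is injected, for example `configuration.GetSection("AwsS3")["AWSBucketPrivate"]`.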

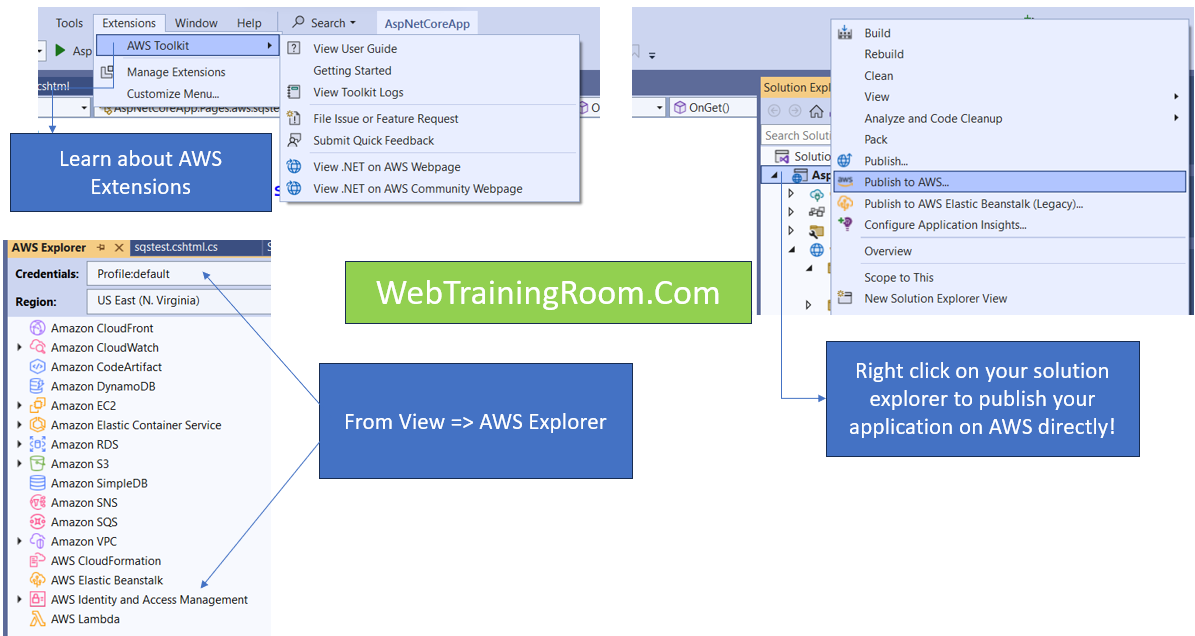

AWS deployment using the AWS Toolkit for Visual Studio

First, download the AWS Toolkit for Visual Studio extension and install it on your machine. After installation, the AWS Explorer appears in Visual Studio alongside Solution Explorer.

When connecting the AWS Explorer, make sure you provide the IAM user credentials.

To publish your ASP.NET Core application to AWS, right-click the project in Solution Explorer and choose "Publish to AWS". The application will be deployed to AWS Elastic Beanstalk, and all files and images will be uploaded to S3 as a ZIP file.

When publishing for the first time, you may get permission-related errors. The deployment runs under the IAM user's credentials, so that user must be granted the permissions listed below before it can act on the different services in your AWS account.

As the AWS Explorer shows, several services are accessed with the same IAM user credentials, but each of them requires its own permission.

Attach the following policies to the IAM user you just created. You can attach them directly to the user, or create a group, attach the policies to the group, and then add the user to the group.

- AWSAppRunnerFullAccess

- AmazonS3FullAccess

- AmazonDynamoDBFullAccess

To work with DynamoDB, we need to create a DynamoDB client using IAM credentials.

using Amazon.DynamoDBv2.DocumentModel;

using Amazon.DynamoDBv2;

using Amazon.DynamoDBv2.DataModel;

using Amazon.DynamoDBv2.Model;

var _awsCredentials = _config.GetSection("AwsIAMCredentials");

AmazonDynamoDBClient client = new AmazonDynamoDBClient(_awsCredentials["AccessKey"], _awsCredentials["SecretKey"]);

Now we use the client object above to read, write and delete data in the DynamoDB database.

Remember, DynamoDB is a NoSQL database, so there are no relationships among tables; each item is stored as a JSON-like document. [DynamoDB is comparable to MongoDB Atlas. I find MongoDB to be the better NoSQL database, but according to the AWS documentation DynamoDB is less expensive and more efficient.]

Here is an example of how to use the IAM credentials with AmazonDynamoDBClient to read and delete data in a DynamoDB table.

Select all students from the "tbStudent" table.

IConfiguration _config;

IDynamoDBContext _context;

// new instance created through DI

public showStudentsModel(IDynamoDBContext context, IConfiguration configuration)

{

_context = context;

_config = configuration;

}

Table table = Table.LoadTable(client, "tbStudent");

var scanOps = new ScanOperationConfig();

var results = table.Scan(scanOps);

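List<Document> data = await results.GetNextSetAsync();

// Student is an assumed model class mapped to the tbStudent table

IEnumerable<Student> students = _context.FromDocuments<Student>(data);

List<Student> allStudents = students.ToList();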
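The scan result is mapped to a typed object, but the model class itself is not shown. A minimal sketch of what such a class might look like follows. The class name `Student`, the choice of `Id` as the hash key, and the `string` property types are all assumptions; only the attribute names (Id, Firstname, Lastname, Email) come from the delete example in this article.

```csharp
using Amazon.DynamoDBv2.DataModel;

// Hypothetical model for the "tbStudent" table; adjust key and types to your table.
[DynamoDBTable("tbStudent")]
public class Student
{
    // Assumed partition (hash) key of the table.
    [DynamoDBHashKey]
    public string Id { get; set; } = string.Empty;

    // Properties map to attributes of the same name by default.
    public string Firstname { get; set; } = string.Empty;

    public string Lastname { get; set; } = string.Empty;

    public string Email { get; set; } = string.Empty;
}
```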

To delete an item, we pass a Document that identifies it. DynamoDB locates the item by its primary key attribute(s), so the document must contain the key (here "Id").

Table table = Table.LoadTable(client, "tbStudent");

var item = new Document();

item["Id"] = Id;

item["Firstname"] = "";

item["Lastname"] = "";

item["Email"] = "@gmail.com";

await table.DeleteItemAsync(item);

In the examples above, the IAM user credentials are read from the appsettings.json file and used to run operations against a DynamoDB table.
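Reading and deleting are covered above; writing follows the same pattern. The sketch below shows one way to insert or overwrite an item with the document model. The helper class `StudentWriter` and its method name are hypothetical, and the attribute names are assumed to match the ones used in the delete example.

```csharp
using System.Threading.Tasks;
using Amazon.DynamoDBv2;
using Amazon.DynamoDBv2.DocumentModel;

// Hypothetical helper, not part of the original project.
public static class StudentWriter
{
    public static async Task SaveStudentAsync(
        IAmazonDynamoDB client, string id, string firstname, string lastname, string email)
    {
        Table table = Table.LoadTable(client, "tbStudent");

        // Build the item; "Id" is assumed to be the table's primary key.
        var item = new Document
        {
            ["Id"] = id,
            ["Firstname"] = firstname,
            ["Lastname"] = lastname,
            ["Email"] = email
        };

        // PutItemAsync creates the item, or replaces an existing item with the same key.
        await table.PutItemAsync(item);
    }
}
```

A call might look like `await StudentWriter.SaveStudentAsync(client, "101", "Jane", "Doe", "jane@example.com");`, where the values are placeholders.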

Upload documents and images to AWS S3 from ASP.NET Core

In AWS S3, we can create public, private and restricted buckets with different types of permission. When we publish our application using "AWS Elastic Beanstalk", the hosting service creates one bucket, where all the files and images are uploaded as a ZIP file.

Now we create a bucket manually and upload images to it from our ASP.NET Core application.

Create a form in your Razor page:

<form method="post" enctype="multipart/form-data">

<input type="file" asp-for="UploadFile" />

<div style="padding:15px;">

<button asp-page-handler="UploadToAwsS3">Upload to S3</button>

</div>

</form>

We need to create an AmazonS3Client instance. Refer to the constructor shown earlier to see how dependencies such as IConfiguration are injected.

var credentials = new BasicAWSCredentials(_awsCredentials["AccessKey"], _awsCredentials["SecretKey"]);

using var client = new AmazonS3Client(credentials, Amazon.RegionEndpoint.USEast1);

Then we need to create a TransferUtilityUploadRequest object and set the file and folder information on it.

Finally, call transferUtility.UploadAsync(uploadRequest); to send the file to S3.

Here is the code for uploading a document to S3.

using Amazon.Runtime;

using Amazon.S3.Transfer;

using Amazon.S3;

public async Task<IActionResult> OnPostUploadToAwsS3()

{

string _bucketName = "s3bucket1aarindam";

string _foldername = "productimages";

string _fileName, _fileContentType;

if (UploadFile != null)

{

_fileName = UploadFile.FileName;

// clean the file name

_fileName = _fileName.ToLower().Replace(" ","-");

_fileContentType = UploadFile.ContentType;

}

else

{

throw new Exception("no file has been selected");

}

var _awsCredentials = _config.GetSection("AwsIAMCredentials");

string _actionMessage = "";

try

{

var credentials = new BasicAWSCredentials(_awsCredentials["AccessKey"], _awsCredentials["SecretKey"]);

var uploadRequest = new TransferUtilityUploadRequest()

{

InputStream = UploadFile.OpenReadStream(),

Key = $"{_foldername}/{_fileName}",

BucketName = _bucketName,

//FilePath= _fileName,

CannedACL = S3CannedACL.PublicRead,

};

// initialise the client

using var client = new AmazonS3Client(credentials, Amazon.RegionEndpoint.USEast1);

// initialise the transfer/upload utility

var transferUtility = new TransferUtility(client);

// start the file upload

await transferUtility.UploadAsync(uploadRequest);

_actionMessage = $"{_fileName} has been uploaded successfully";

}

catch (AmazonS3Exception ex)

{

// a single handler is enough; the original nested try/catch caught the same exception twice

_actionMessage = ex.ToString();

}

return Redirect("readfroms3");

}
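The handler cleans the file name by lowercasing it and replacing spaces. A slightly more defensive version is sketched below; the class `S3KeyHelper` and the exact set of allowed characters are assumptions chosen for illustration, not something the original code does.

```csharp
using System;
using System.IO;
using System.Text.RegularExpressions;

// Hypothetical helper: turns a client-supplied file name into a safe S3 key segment.
public static class S3KeyHelper
{
    public static string CleanFileName(string fileName)
    {
        if (string.IsNullOrWhiteSpace(fileName))
            throw new ArgumentException("File name is required.", nameof(fileName));

        // Some browsers send a full path; keep only the last segment.
        string name = Path.GetFileName(fileName.Replace('\\', '/'));

        // Same idea as the handler: lowercase and turn whitespace into hyphens.
        name = name.Trim().ToLowerInvariant();
        name = Regex.Replace(name, @"\s+", "-");

        // Drop everything except letters, digits, dot, underscore and hyphen.
        name = Regex.Replace(name, @"[^a-z0-9._-]", "");

        if (name.Length == 0)
            throw new ArgumentException("File name has no usable characters.", nameof(fileName));

        return name;
    }
}
```

For example, `S3KeyHelper.CleanFileName("My Photo (1).PNG")` returns `my-photo-1.png`.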

Try changing the permission CannedACL = S3CannedACL.PublicRead by setting a different value of S3CannedACL; this value indicates who can access the document you upload.

Reminder: make sure you have granted AmazonS3FullAccess to your IAM user.
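To illustrate a different S3CannedACL value, the sketch below uploads an object as private and returns a time-limited pre-signed URL for reading it. The class and method names are hypothetical, and the 15-minute expiry is an arbitrary choice. Depending on the bucket's Object Ownership setting, ACLs may be disabled, in which case a request that sets a public ACL can be rejected.

```csharp
using System;
using System.IO;
using System.Threading.Tasks;
using Amazon.S3;
using Amazon.S3.Model;
using Amazon.S3.Transfer;

// Hypothetical helper, not part of the original project.
public static class PrivateUploadSketch
{
    public static async Task<string> UploadPrivateAsync(
        IAmazonS3 client, Stream content, string bucketName, string key)
    {
        var request = new TransferUtilityUploadRequest
        {
            InputStream = content,
            BucketName = bucketName,
            Key = key,
            // Only the owner (and principals allowed by policy) can read the object.
            CannedACL = S3CannedACL.Private
        };

        using var transferUtility = new TransferUtility(client);
        await transferUtility.UploadAsync(request);

        // A pre-signed URL grants temporary read access without making the object public.
        return client.GetPreSignedURL(new GetPreSignedUrlRequest
        {
            BucketName = bucketName,
            Key = key,
            Expires = DateTime.UtcNow.AddMinutes(15)
        });
    }
}
```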

Next, let's learn how to use Azure Cloud Storage from an ASP.NET Core C# application.